NATIXIANs, March brought the final chapter of Q1 2026, and we are heading into the second quarter with real momentum. Between a landmark partnership announcement, a new Network Laps milestone, and fresh content that digs into one of autonomy's hardest unsolved problems, March was a month worth recapping in full.

Let's get into it.

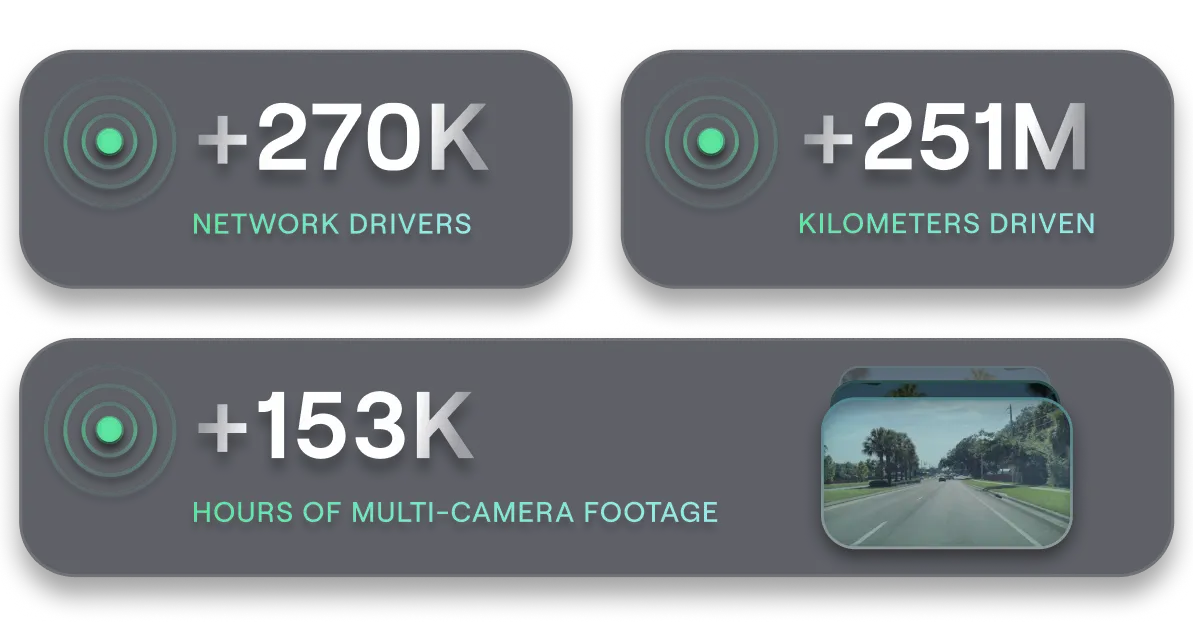

The NATIX Network wrapped Q1 in strong form. Over 270K registered drivers now contribute to the network through the Drive& app, and together they have mapped over 251M KMs of road. That puts us past the 250M KM mark, completing yet another milestone in the Network Laps!

With over 1.4B map data points detected and over 153K hours of multi-camera footage collected, the dataset's depth and diversity continue to grow in exactly the ways that Physical AI development demands.

The headline announcement in March was our partnership with MaprGo, a spatial AI platform that converts large-scale visual data from the physical world into structured machine intelligence. MaprGo works with global automotive OEMs, EV manufacturers, digital mapping platforms, and Fortune 500 companies, and its platform is designed to extract exactly the signals that autonomous systems need: road geometry, lane markings, infrastructure assets, traffic behavior, and environmental context, all continuously updated as the real world changes.

Through this collaboration, NATIX's global multi-camera data flows directly into MaprGo's spatial AI engine, enabling the generation of OpenSCENARIO datasets, simulation-ready environments, and data-driven scenarios for autonomous driving stacks, robotics platforms, and digital twin systems. Instead of relying on limited survey fleets or manually designed scenarios, developers can now build realistic environments from real-world footage at a global scale. This is especially critical for rare events and edge cases, where real-world diversity is the only true source of ground truth. Follow the link to read the full article, Spatial AI Intelligence for Physical AI.

March also brought a new article tackling one of the most important and underappreciated challenges in autonomous driving: edge cases and long-tail scenarios. In this article, CEO Alireza explains why the hardest part of achieving full autonomy is no longer getting a system to perform well on clean highways; it is making that system reliable when the world stops behaving predictably.

The article covers how World Foundation Models (WFMs) can turn a single real-world edge case into a wide range of simulated variations during training, and why real-world multi-camera data becomes even more critical during testing and validation, where you need the exact scenario: same geometry, same timing, same behavior. It also explains how NATIX uses Vision-Language Models (VLMs) to make the long tail searchable, surfacing rare interactions from large volumes of ordinary footage that would otherwise take weeks of manual review to find. For anyone following the autonomy space, it is a worthwhile read: Edge Case Long tail Scenarios for Autonomous Driving.

The 250M KM milestone is one worth pausing on. The Network Laps remains the biggest reward campaign in DePIN, and every milestone crossed is a direct reflection of hundreds of thousands of contributors building something that genuinely matters. 250M KMs of globally sourced, multi-camera road data is not a vanity number; it is the kind of dataset that changes what Physical AI can do. This community built it, and this milestone belongs to every driver in the network.

To complement the partnership announcement, we held a special AMA with Naresh Shetty, CEO of MaprGo. The session covered how the collaboration works in practice, included a live demo showing the integration between NATIX data and the MaprGo platform, and opened the floor for community questions.

It was a great opportunity to go beyond the press release and show what spatial AI actually looks like when applied to real-world multi-camera footage, and the community came with sharp questions. Watch the full MaprGo x NATIX AMA here.

We also released an AI-generated podcast episode based on one of our previously published articles, making the content more accessible for those who prefer audio over reading. This is part of our ongoing effort to meet the community across different formats and keep the ideas we are putting out in the Physical AI and DePIN space as easy to engage with as possible. Give the episode, From VLMs to WFMs, a listen here.

Q2 is now open, and we have a lot of work ahead of us. The partnerships and data capabilities we are building are laying the groundwork for announcements that will reinforce NATIX's position at the center of the Physical AI ecosystem. The demand for structured, diverse, real-world multi-camera data is accelerating, and we are building the network that meets it at scale. Stay tuned.

As always, make sure to follow us on Twitter at @NATIXNetwork to stay up to date with our announcements and releases.