In recent years, autonomous vehicles have advanced significantly. From building pricey data collection cars and sloppy models trained in empty parking lots, we now have Teslas with FSD, driverless Waymos, and an increasing number of new AV initiatives. But with each step we unlock, achieving full autonomy is getting harder.

Autonomous driving does not fail when a car drives on a clean highway on a sunny day. It fails when the world gets weird, fast. A kid runs out from behind a parked van, a scooter cuts across a lane, and construction works temporarily block the road. Suddenly, the “easy” parts of driving are not where the point is.

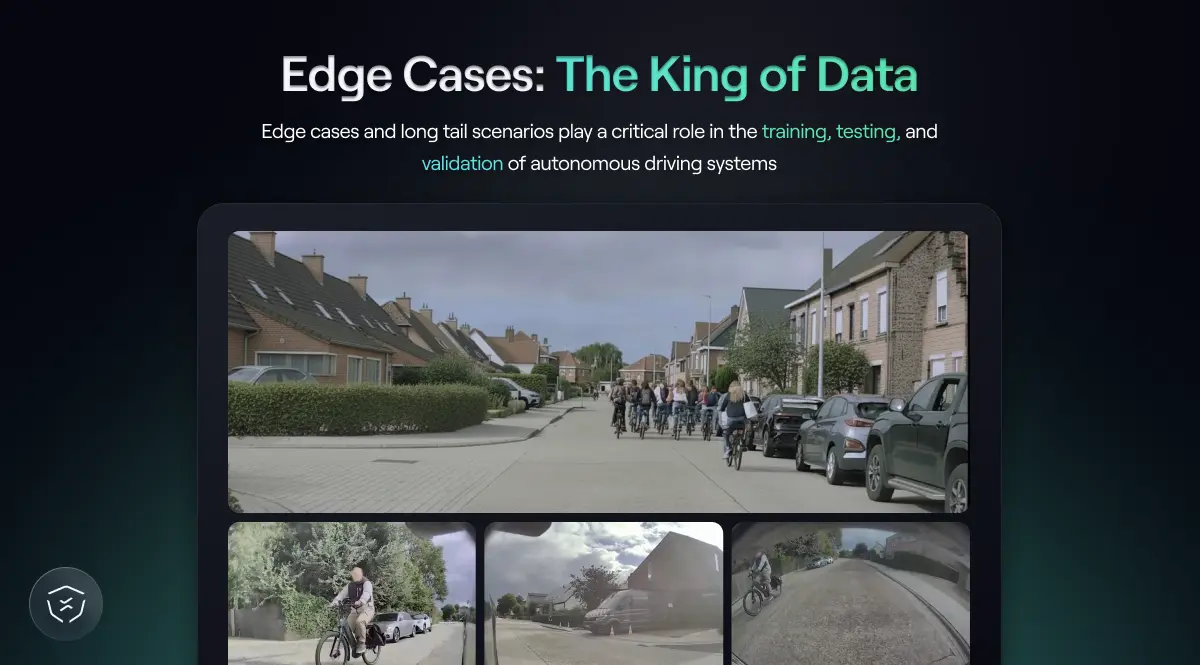

That is why the most important problem in autonomy is proving the system stays safe in the rare ones – Edge Cases and Long Tail Scenarios. That’s exactly what autonomous driving is dying for to reach a point where, as Uber CEO Dara Khosrowshahi described it, “driving will look a lot like horseback riding."

Edge cases are single, rare events that fall outside the normal, common scenarios you encounter in everyday driving. These are often the "breaking point" where an autonomous car's perception or logic fails because it has never encountered that exact situation before. These can be the result of an object on the road, extreme weather conditions, or unique pedestrian behavior. Edge cases are "solvable" individually: Engineers can identify a specific edge case, like a truck blocking the road, and add it to the training data.

Long-tail scenarios are the other side of the same coin. If an Edge Case describes a specific event, the Long Tail is the statistical problem created by an infinite number of those events. The long tail is not a neat list of “rare events,” it’s more like rare combinations. Rain plus glare plus a weird intersection plus an aggressive merge. Night plus fog plus worn lane markings plus a pedestrian dressed head-to-toe in black. Each factor is common, but the combo is not. The Long Tail is "unsolvable" by brute force because there are an infinite number of rare scenarios, and you can never train for all of them. The "Long Tail Problem" is the realization that even if you solve 1,000 edge cases, there will always be a 1,001st that is just as dangerous. Edge Cases and Long Tail scenarios are one of the biggest hurdles autonomous driving faces today due to their uncommon nature, making them infinitely harder to capture and collect.

Think about restaurants. Most meals are predictable and boring in a good way, but if the kitchen only practices making the top five dishes, the first time a customer asks for something slightly unusual, everything falls apart. It’s like the difference between memorizing recipes vs. cooking intuitively. Driving is the same, except the consequences of “falling apart” are far worse, and that is where autonomy gets tested.

When building an autonomous vehicle, Edge Cases and Long Tail scenarios are the holy grail of data. They are the difference between a model that performs well in demos and a model that survives reality.

Let’s start with training.

An autonomous driving system learns from patterns. What it sees often, it learns to handle well. Lane keeping, neat turns, and smooth roundabouts are all part of the common, boring day-to-day driving. But that’s not how the real world works.

Edge Cases and Long Tail scenarios matter in training because they expand the model’s understanding of how the world can behave. The broader the exposure, the stronger the intuition the model builds. But this also creates three structural problems:

That makes brute-force collection inefficient. You can drive millions of kilometers and still miss the specific combination that causes a failure. This is exactly where modern techniques like World Foundation Models (WFMs) change the equation.

World Models learn how scenes evolve over time. Instead of memorizing what happened in a specific clip, they learn the structure of motion, interaction, and physics behind it. That means a real edge case can become a seed. From one rare event, a world model can generate many “realities”: different timing, lighting, trajectories, or new agent behaviors. Feeding a WFM one edge case can create a wide range of simulated futures, all grounded in physical realism. In other words, the long tail stops being something you passively wait for and becomes something you can actively explore. The goal is to make the model more capable by expanding what it has seen. That is where World Foundation Models shine. You can stress the system in ways that would be unsafe or impractical to recreate on public roads. Training benefits from this amplification because the objective is robustness.

Testing and validation are a different beast.

When you test and validate an autonomous driving module, you want to know how it behaves in a scenario that happened exactly as it happened in the real world: The exact geometry, timing, and pedestrian behavior. You want to replay reality, not a modified version of it. Edge cases become reference points. They become benchmarks and stress tests that every future version of the system must pass.

If training asks, “Can the model handle more?” Testing and validation ask, “Can the model handle this?” That is why real-world edge cases play an even bigger role in testing and validation. They anchor the system to reality.

This is also where data quality becomes critical. For testing and validation, multi-camera context, accurate trajectories, and clean scenario extraction matter enormously. If the reference scenario is flawed or incomplete, your validation is flawed. In other words, World Models help you explore the long tail during training. Real-world edge cases help you measure it during testing and validation. Both are essential. They just serve different purposes.

So if Edge Cases and Long Tail scenarios are so critical for training, testing and validation, why isn’t every autonomous driving team swimming in them?

As important as Edge Cases and Long Tail scenarios are, collecting them at scale is one of the hardest operational problems in autonomous driving. The real world does not cooperate. As Sarfraz Maredia, Global Head of Autonomous Mobility and Delivery at Uber, recently noted, “Handling long-tail and unpredictable driving scenarios is one of the defining challenges of autonomy,” underscoring that systems still need to understand the world.

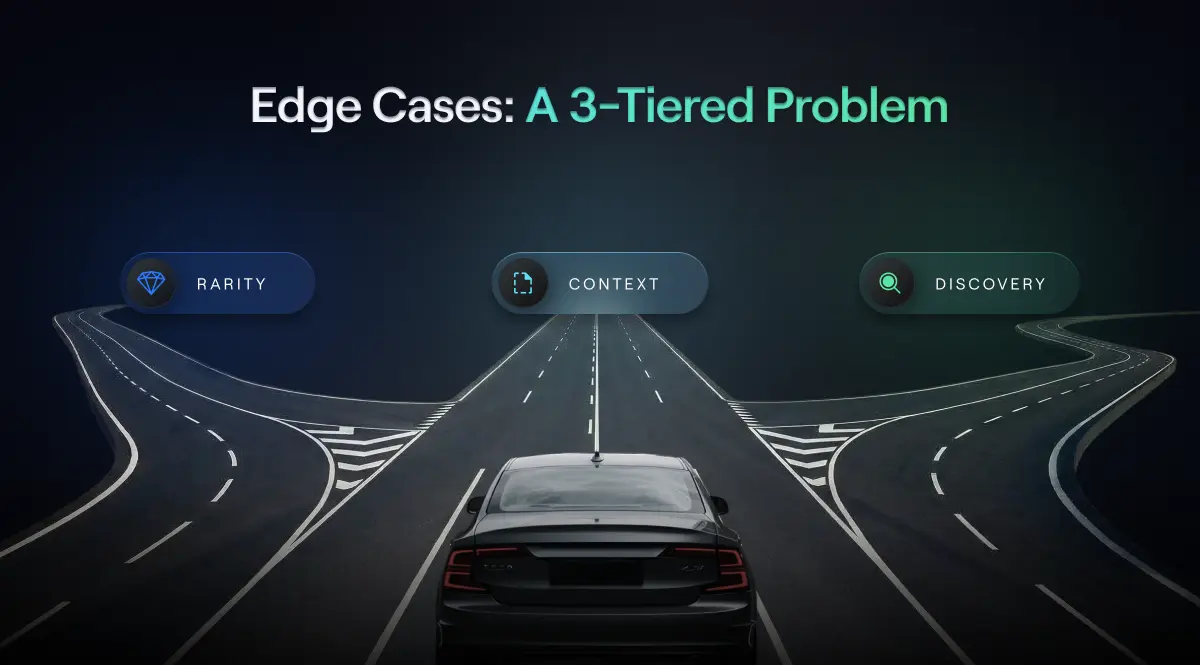

First, there is the problem of rarity. Edge cases are rare by definition because they’re random and appear in unpredictable combinations. You can’t schedule them or ask for them, and you can’t send a fleet out and say, “Please encounter three complex near-miss scenarios before lunch.”

Even large autonomous driving fleets run into a basic math problem. Most miles are uneventful: Highways, routine intersections, predictable behavior. The more efficient your data collection becomes, the more average your dataset tends to look. And average data does not prepare a system for extreme variation.

There is also a second layer to the problem: context.

Modern autonomous vehicles rely on multi-camera systems. Rare events often unfold across different angles. A cyclist approaching from the side, a pedestrian emerging from behind a wall, a vehicle emerging from a blind spot. If your dataset is limited to front-facing video, you may capture the moment something went wrong, but miss the lead-up that explains why. Without full spatial context, you are not seeing the whole story. And if the story is incomplete, your training, testing, and validation are too.

Then there is the discovery problem.

A company may have thousands of hours of visual driving data, but no efficient way to surface the specific interactions that matter. Edge Cases and Long Tail scenarios do not sit in a separate folder labeled “rare stuff.” Manual review does not scale, basic metadata filtering only gets you so far, and you often do not know what you are looking for until after a model fails.

So the challenge is not just collecting more data. It is collecting the right data across geographies, weather conditions, road types, and driving cultures, then being able to find and structure the rare events inside it. It requires more than mileage. It requires structure, context, and the ability to surface the rare moments that actually matter.

If collecting edge cases at scale is fundamentally a discovery problem rather than a mileage problem, then the solution has to combine structured data capture with intelligent search. Driving more is not enough. You need a way to surface the rare interactions hidden inside ordinary footage and turn them into something usable for training, testing, and validation.

NATIX approaches this through three connected steps: capture, discovery, and conversion.

The network collects multi-camera footage, along with GPS, IMU, and contextual trip metadata, such as weather, road type, and time of day. This matters because rare events rarely unfold cleanly in a single forward-facing frame. Without multi-camera context, an edge case is often reduced to a fragment of the full story.

Once captured, we tackle the challenge of discovery. Edge cases are buried inside thousands of uneventful kilometers. This is where Vision-Language Models (VLMs) become essential. Instead of relying only on metadata filters, NATIX uses VLMs to index and rank multi-camera footage based on both visual content and contextual signals. Engineers can query the dataset in natural language and retrieve scenarios that match complex descriptions, such as:

The model connects language to visual patterns across time and different cameras, making the long tail searchable. What used to require weeks of manual review can now be surfaced in seconds, allowing autonomy teams to actively hunt for rare scenarios rather than waiting for failures to expose them.

This approach aligns with what many leaders in the field have pointed out about the future of AI systems. Yann LeCun recently claimed that “The next major phase of artificial intelligence will centre on systems that can truly understand and model the physical world, rather than just process language.” That understanding does not come from isolated frames or synthetic abstractions alone. It comes from large-scale, structured exposure to real-world interactions, especially the ones that are hard to find.

Finally, the solution has to combine structured data capture with intelligent search. A rare clip has limited value unless it can be integrated into training pipelines or validation workflows. NATIX converts selected edge cases into structured scenario representations with trajectories, lane context, and environmental geometry. This makes them usable inside established simulation and testing tools, so they can serve two distinct purposes: as seeds for World Foundation Models during training, and as high-fidelity reference scenarios during validation.

In practical terms, the long tail stops being abstract and becomes operational. Multi-camera edge cases are captured with context, surfaced through VLM-based search, and structured for reuse inside real training and validation workflows. Instead of driving endlessly in hopes of encountering the right combination of events, teams can focus on extracting and amplifying the rare moments that actually determine safety.

Autonomous driving will not be won on clean highways in good weather. It will be won in the uncomfortable margins, in the rare combinations of events that reveal whether a system truly understands the world or is simply repeating what it has seen most often.

Edge cases and long tail scenarios are not corner problems. They are the proving ground. They shape how models are trained, how they are stress-tested, and how confidence is earned before a system is deployed. Training uses them to expand intuition. Validation uses them to anchor reality. Both depend on access to the right data.

The real bottleneck is no longer model architecture alone. It is the ability to find, structure, and operationalize the rare moments that matter. As World Foundation Models make it possible to amplify edge cases during training, the importance of high-quality, multi-camera real-world data for validation only grows.

In the end, autonomy is not about solving the average case. It is about building systems that remain stable when the world stops behaving predictably. The teams that master the long tail will be the ones that move from impressive demos to truly reliable autonomy.