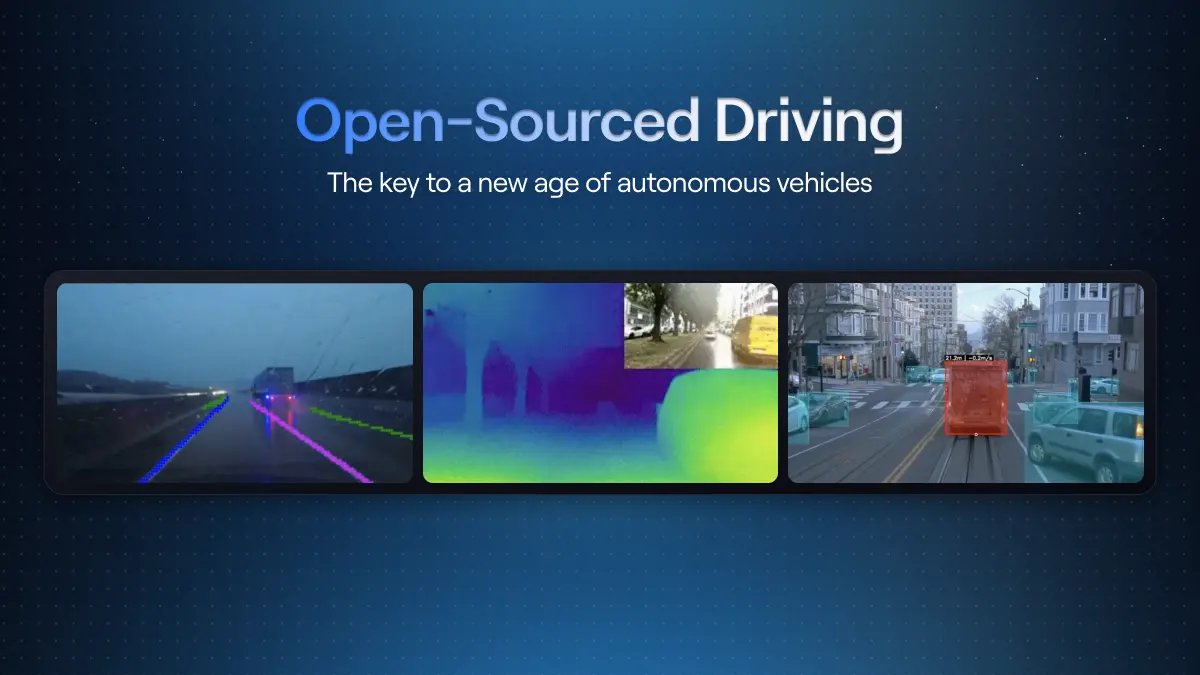

Open-source autonomy is entering a new phase. The software is mature, the frameworks exist, and serious players are involved. What has been missing is large-scale, real-world multi-camera data to train the next generation of end-to-end autonomous driving models and the world models that power them.

With NATIX joining the Autoware Foundation, that missing piece begins to fall into place. NATIX is becoming a Premium member of the foundation, joining names like AMD, AWS, Arm, Red Hat, TIER IV, Capgemini, and more. We will provide multi-camera driving data to help build Autoware’s open-source end-to-end (E2E) autonomous driving model, including the world model components that support it. Our data will feed training, simulation, and validation for the entire open-source AV community.

Autoware is the world’s leading open-source software stack for autonomous driving. Founded by TIER IV, a prominent name in the autonomous driving industry, it draws major contributors from across the ecosystem, including Arm, Red Hat, Capgemini, and others who are helping push open-source autonomy forward. The Autoware Foundation is a global nonprofit organization that brings together OEMs, Tier 1s, robotics companies, and researchers around a shared goal: a production-grade, open autonomy stack that does not have to be rebuilt from scratch in every company.

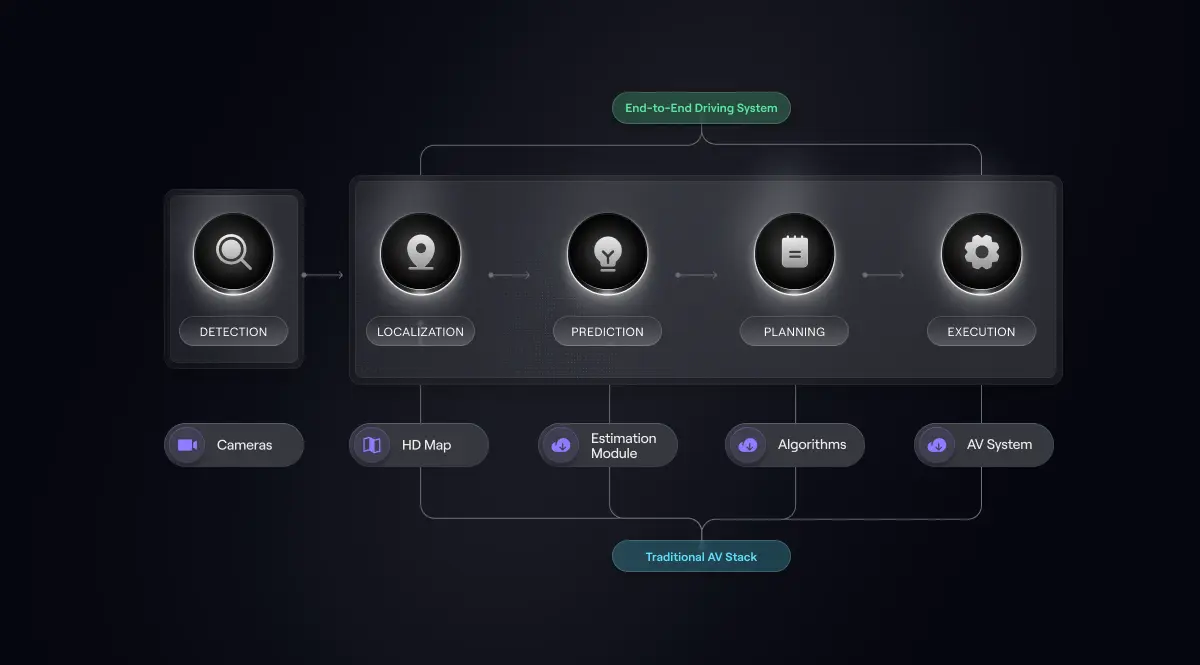

Built on the Robot Operating System (ROS), Autoware runs on a wide range of platforms, from passenger cars and trucks to shuttles, delivery robots, and industrial vehicles. The foundation provides all the core functions needed to operate a self-driving vehicle.

Now, Autoware is taking the next logical step in autonomy: an end-to-end (E2E) AI model.

The move toward open-source autonomy is practical. Physical AI systems improve through exposure to data, experimentation, and iteration. When development happens behind closed doors, progress tends to move slowly because each company must build its own stack, collect its own datasets, and solve the same problems independently.

Open-source ecosystems change that dynamic. They allow researchers, robotics companies, and automotive manufacturers to build on a shared foundation rather than starting from scratch. Improvements made by one contributor can immediately benefit the entire community.

This model has already reshaped software development, cloud infrastructure, and machine learning frameworks. It is now beginning to reshape autonomy.

This open approach is already taking shape across the ecosystem. In addition to joining the Autoware Foundation, NATIX is collaborating with Valeo on an open-source World Foundation Model designed to help autonomous systems learn how real-world environments evolve over time. By contributing real-world driving data to both efforts, NATIX helps strengthen the foundations of open autonomy, from perception and driving policies to the simulation environments that train them.

Together, these initiatives reflect a broader shift toward open, collaborative development of the core technologies that will power the next generation of Physical AI.

Autonomous driving systems were traditionally built as chains of specialized modules. One component handled perception, another localization, another prediction, and another planning and control. Each part of the system was engineered and tuned independently. While this modular approach enabled early progress, it also introduced limitations. Systems became difficult to adapt to new environments, hard to scale across vehicle platforms, and fragile when encountering rare edge cases.

The industry is now shifting toward data-centric, end-to-end (E2E) models. Instead of stitching together many separate components, a single AI system learns the full driving task directly from data, mapping multi-camera input to driving actions under a shared training objective. Driving is increasingly treated not as a sequence of hand-coded rules, but as a learned behavior.
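To make the idea of a shared training objective concrete, here is a minimal toy sketch, not Autoware's or NATIX's actual model. It assumes each camera frame has already been reduced to a small feature vector, concatenates the features from all cameras, and trains a single linear policy to imitate a logged steering value. The camera count, feature sizes, function names, and synthetic data are all hypothetical stand-ins for a deep network and real driving logs.

```python
import random

# Toy illustration only: a linear "policy" stands in for a deep E2E network.
# All names, sizes, and data below are hypothetical assumptions.
NUM_CAMERAS = 3          # assumed camera count for this sketch
FEATURES_PER_CAMERA = 2  # assumed per-camera feature size


def make_logged_batch(num_samples, seed=0):
    """Create synthetic (multi-camera features, logged steering) pairs."""
    rng = random.Random(seed)
    true_weights = [0.5, -0.2, 0.1, 0.3, -0.4, 0.2]  # hidden "driver" behavior
    batch = []
    for _ in range(num_samples):
        # Concatenate the per-camera features into one input vector.
        features = [rng.uniform(-1.0, 1.0)
                    for _ in range(NUM_CAMERAS * FEATURES_PER_CAMERA)]
        action = sum(w * x for w, x in zip(true_weights, features))
        batch.append((features, action))
    return batch


def policy(weights, features):
    """Map the fused camera features directly to one driving action."""
    return sum(w * x for w, x in zip(weights, features))


def imitation_loss(weights, batch):
    """Single shared objective: mean squared error against logged actions."""
    return sum((policy(weights, f) - a) ** 2 for f, a in batch) / len(batch)


def train(batch, steps=300, lr=0.1):
    """Fit all weights jointly with plain gradient descent on the shared loss."""
    n = len(batch[0][0])
    weights = [0.0] * n
    for _ in range(steps):
        grads = [0.0] * n
        for features, action in batch:
            error = policy(weights, features) - action
            for i, x in enumerate(features):
                grads[i] += 2.0 * error * x / len(batch)
        weights = [w - lr * g for w, g in zip(weights, grads)]
    return weights


if __name__ == "__main__":
    batch = make_logged_batch(64)
    before = imitation_loss([0.0] * 6, batch)
    after = imitation_loss(train(batch), batch)
    print(f"loss before: {before:.4f}, after: {after:.6f}")
```

The point of the sketch is structural: there is no separate perception, prediction, or planning stage to tune, only one set of weights updated against one loss. Real E2E systems replace the linear map with a large neural network and the synthetic pairs with recorded multi-camera driving data.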

This shift reflects a deeper realization. Humans do not become drivers simply by memorizing traffic rules. We rely on an intuitive understanding of motion, risk, and social behavior built over years of real-world experience. Physical AI systems must learn those same patterns from data.

World models play an important role in this new paradigm. They learn how real-world scenes evolve over time and allow autonomous systems to train in realistic virtual environments generated from real driving footage. Instead of manually designing every simulation scenario, world models can generate new variations of real-world situations while preserving the underlying physical dynamics.

All of this depends on large-scale visual driving data. Cameras capture how the world actually behaves, and those recordings become the experience from which autonomous systems learn.

Autoware has laid out a roadmap for gradually introducing these end-to-end capabilities into its open-source autonomy stack. Multi-camera data from NATIX will support this transition by providing the real-world training signals needed to develop, test, and validate the next generation of open autonomous driving systems.

Training an open-source E2E stack is fundamentally a data problem. The model needs to see many cities, many driving cultures, many road designs, and many rare events. It requires diverse, multi-camera video that captures what happens around the vehicle, not just in front of it. NATIX provides exactly this.

As the industry increasingly shifts toward World Foundation Models and end-to-end learning, the ability to collect and structure real-world driving data at scale becomes one of the defining advantages in autonomy development.

The NATIX VX360 network collects multi-camera driving footage across multiple continents, road types, and conditions. The result is a growing dataset of global, multi-camera driving behavior that reflects how the real world works, from everyday traffic to unusual edge cases.

Autoware plans to use NATIX data for training, simulation, and validation of its open-source E2E driving model.

By providing multi-camera data at this scale, NATIX helps ensure that open-source E2E models can keep pace with, and in some cases rival, their closed, proprietary counterparts. Access to high-quality real-world training data is one of the largest barriers in modern autonomy, and opening that access to the broader community has the potential to dramatically accelerate development across the entire field.

"NATIX brings a large and diverse open-source data set which covers many long-tail edge case scenarios. This allows us to find the data that matters most on roads across the world to build safe, introspectable, and generalizable End-to-End AI models. We're excited to begin our collaboration with NATIX and to share our technology outputs with the wider automotive and autonomous driving ecosystem," said Muhammad Zain Khawaja, Managing Director of Product at the Autoware Foundation.

The community behind Autoware is large, global, and fast-growing, with contributions from companies ranging from early-stage robotics startups to established OEMs and Tier 1 suppliers. By becoming a Premium member of the Autoware Foundation, NATIX supports the growth of open, accessible, and production-ready autonomous driving technology for some of the biggest names in the autonomous driving industry.

The broader autonomous driving industry is moving in the same direction: end-to-end learning on top of powerful world models that can simulate millions of situations before deployment. If open-source autonomy is to remain relevant in that world, it needs access to serious data and training workflows.

“Open-source autonomy needs access to serious real-world data as the industry moves toward end-to-end AI,” says Alireza Ghods, CEO and co-founder of NATIX. “By joining the Autoware Foundation and contributing global multi-camera driving data, we are helping the community train and validate the next generation of autonomous driving systems.”

Joining the Autoware Foundation and supplying our data is a natural step for NATIX. Our mission is to turn real-world driving into useful, accessible fuel for Physical AI. Helping Autoware build an E2E driving stack and its underlying world model extends that mission into the heart of the open-source ecosystem.

Autoware provides the open-source autonomy stack. NATIX provides the global multi-camera data that allows it to learn, simulate, and improve at scale. Together, this partnership helps push open-source autonomy into its next stage, where end-to-end models, world models, and large-scale real-world data begin to converge.

For NATIX, the partnership is another step toward that mission, helping accelerate the development of autonomous systems across the entire Physical AI ecosystem.